Isuru Samaratunga, Research Manager

Digital technologies such as artificial intelligence (AI) have growing potential to be integrated into the workplace. The recent release of ChatGPT has opened up many such possibilities, with users rushing to try it out for purposes ranging from the productivity-enhancing to the bizarre. The chatbot is a natural language processing (NLP) tool that allows users to interact with it in a ‘conversational’ manner, to answer questions, follow instructions, and so on. AI tools such as ChatGPT, put to use in the workplace, can certainly bring in new efficiencies, reducing the time workers spend on mundane tasks and freeing it up for other kinds of work. But how do tools like ChatGPT fare on more complex tasks such as the analysis of interview transcripts? As a qualitative researcher, I decided to put ChatGPT to the test.

I tested ChatGPT’s[i] qualitative data analysis capabilities by instructing[ii] it to analyze an English-language transcript of an in-depth one-hour interview conducted recently by LIRNEasia. The interview was part of a study to understand the empowerment impacts of digitally-enabled work for women in Sri Lanka and India.[iii]

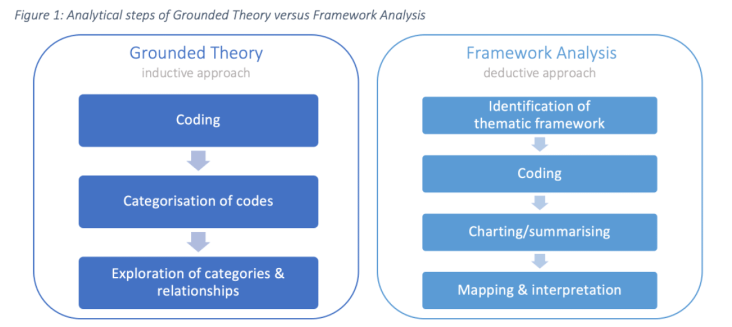

Qualitative interview transcripts can be analyzed using different techniques. Two common approaches are Grounded Theory and Framework Analysis, both of which I use when analyzing qualitative data. Grounded Theory takes more of a bottom-up (inductive) approach, allowing the categories in the data, and the relationships between them, to emerge through the analysis. Framework Analysis, on the other hand, takes more of a deductive approach, starting with a thematic framework in mind and then systematically analyzing the data by theme (Figure 1).

The transcript had previously been analyzed both manually and with ATLAS.ti[iv], a computer-assisted qualitative data analysis software (CAQDAS) package, using a mix of inductive and deductive approaches. For comparison, I instructed ChatGPT to analyze the same transcript using both approaches. Here is what I learnt.

1. ChatGPT’s memory is too short to code a one-hour interview transcript

ChatGPT can only analyze transcripts of about 3,200 words in one attempt. The original transcript had just over 8,000 words (nearly one hour of interview time). I fed the transcript in three pieces and instructed ChatGPT to consider the three parts as one transcript. ChatGPT was not able to combine the partial transcripts, and on some occasions it combined them with unrelated transcripts (from an entirely unknown source!) and proceeded with the analysis. Without long-term memory, ChatGPT ‘forgets’ what it has been instructed to do, which makes it something like working with a person with amnesia. As a work-around, to keep the number of words within the ChatGPT limit, I deleted certain sections and words from the transcript: the informed consent section, the icebreaker questions, all questions asked by the moderator, and filler words such as ‘mm,’ ‘ah,’ ‘you know…’ Once the data had been ‘prepared’ in this way, the bot was able to crunch the entire transcript in one go (the results are discussed later in this blog).
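The preparation described above was done by hand, but it can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Moderator:` speaker label, the filler list, and all function names are hypothetical, and the 3,200-word figure is the approximate limit I observed rather than a documented one.

```python
import re

WORD_LIMIT = 3200  # approximate per-attempt limit observed in this test (assumption)

# Hypothetical filler list; extend it to match your own transcripts.
FILLERS = ["you know", "mm", "ah"]

# Match a filler as a whole word, plus any trailing comma/period and spaces.
FILLER_PATTERN = re.compile(
    r"\b(?:" + "|".join(re.escape(f) for f in FILLERS) + r")\b[,.]*\s*",
    flags=re.IGNORECASE,
)


def prepare_transcript(lines, moderator_label="Moderator:"):
    """Drop moderator turns and strip filler words from the remaining lines."""
    kept = []
    for line in lines:
        if line.strip().startswith(moderator_label):
            continue  # skip questions asked by the moderator
        cleaned = FILLER_PATTERN.sub("", line)
        cleaned = re.sub(r"\s{2,}", " ", cleaned).strip()
        if cleaned:
            kept.append(cleaned)
    return kept


def word_count(lines):
    """Count whitespace-separated words across all lines."""
    return sum(len(line.split()) for line in lines)


def fits_limit(lines, limit=WORD_LIMIT):
    """True if the prepared transcript is within the word limit."""
    return word_count(lines) <= limit


def split_into_chunks(text, limit=WORD_LIMIT):
    """Split text into consecutive pieces of at most `limit` words each."""
    words = text.split()
    return [" ".join(words[i:i + limit]) for i in range(0, len(words), limit)]
```

With these assumptions, an 8,000-word transcript splits into three chunks, which matches the three pieces I had to feed in before trimming. Checking `fits_limit` after `prepare_transcript` tells you whether trimming alone is enough to submit the transcript in one go.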

2. ChatGPT performs better with a deductive approach to coding than with an inductive approach

I tested out a deductive approach by feeding ChatGPT a predefined code list, which was developed for the larger research project. The code list was developed manually with the help of preliminary insights generated through ATLAS.ti. ChatGPT performed relatively well at developing codes based on the actual words of the participants (in-vivo codes) such as ‘parallel jobs.’ It did not perform as well with open codes, which require sentiment analysis and capturing nuances in the transcript. While software like ATLAS.ti was able to perform sentiment analysis on the transcript, capturing nuances still requires human intervention.
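Because in-vivo codes are taken from the participants’ actual words, one simple way to review a code list returned by the bot is to check which codes appear verbatim in the transcript. The sketch below is an assumption-laden illustration, not part of the test I ran: the function names are hypothetical, and a plain substring match will miss paraphrased or inflected wording.

```python
import re


def normalize(text):
    """Lowercase and collapse whitespace so matching ignores case and line breaks."""
    return re.sub(r"\s+", " ", text.lower()).strip()


def split_in_vivo(codes, transcript):
    """Partition codes into (found verbatim in transcript, not found verbatim)."""
    haystack = normalize(transcript)
    in_vivo, other = [], []
    for code in codes:
        if normalize(code) in haystack:
            in_vivo.append(code)
        else:
            other.append(code)
    return in_vivo, other
```

Codes that land in the second list are not wrong; they are simply candidates for open codes, which is where human review matters most.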

To test ChatGPT’s inductive qualitative coding abilities, I instructed it to create its own list of codes based on the transcript. The result was an extensive code list with around 20 codes, a large number for a single transcript. To streamline the codes, I then fed in the research objectives and instructed the bot to consider them when creating codes. ChatGPT followed the instructions and provided a refined, more focused list of codes. However, once again, its ‘short-term’ memory became a hurdle, preventing it from following the sequential steps in the instructions provided for the inductive approach.

3. ChatGPT is limited by a binary approach to developing code categories

ChatGPT can perform the categorization of codes in Grounded Theory analysis to a certain extent. Codes are closer to the data (the transcript) while categories are much more abstract; categorization requires understanding the dis/similarities among codes and un/grouping them accordingly. Creating categories thus requires human intervention. On the upside, ChatGPT was able to categorize about 20 codes into six categories which represented the findings of the transcript reasonably well. On the downside, however, it did not allow for the same code falling under two categories (which is obviously possible in manual categorization). Figure 2 compares software-assisted, manually created code groups (categories) against some of the ChatGPT-created categories. Certain codes such as ‘flexibility advantages’ and ‘flexibility disadvantages’ were included under multiple categories in the manual analysis, but ChatGPT did not allow for such flexibility.
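The difference between the two categorizations can be made concrete by treating each category as a set of codes and listing the codes that belong to more than one category. The following is a minimal sketch; the function name is hypothetical, and the category assignments in the usage example are invented for illustration rather than taken from Figure 2.

```python
from collections import defaultdict


def codes_in_multiple_categories(categories):
    """Return {code: sorted category names} for codes that sit in 2+ categories.

    `categories` maps a category name to the set of codes grouped under it.
    """
    membership = defaultdict(set)
    for category, codes in categories.items():
        for code in codes:
            membership[code].add(category)
    return {
        code: sorted(cats)
        for code, cats in membership.items()
        if len(cats) > 1
    }


# Illustrative data only: these groupings are assumptions, not the study's results.
manual = {
    "daily routine": {"flexibility advantages", "parallel jobs"},
    "balancing responsibilities": {"flexibility advantages", "flexibility disadvantages"},
}
print(codes_in_multiple_categories(manual))
```

Run on a strictly one-category-per-code grouping, like the one ChatGPT produced, the function returns an empty dictionary, which is a quick way to spot that overlap has been ruled out.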

4. ChatGPT can portray the interviewee’s real-world situations

Once ChatGPT had identified codes and categories, it was then able to identify relationships between categories (the third step of Grounded Theory analysis). ChatGPT described four sets of relationships between the categories and, interestingly, those scenarios are a fairly good portrayal of real-world situations (Figure 3). For instance, ChatGPT identified the relationship between the ‘daily routine’ and ‘balancing responsibilities’ categories and explained it as follows:

‘the speaker’s daily routine is largely focused on balancing her various responsibilities, [she] describes how [she] try to fit in part-time work, studying, and running a business into [her] day, and how this can be challenging at times’

In sum

Testing ChatGPT for qualitative data analysis reveals both the limits and the potential of the chatbot. ‘Short memory’ is the main limitation: it restricts the number of instructions you can give and still get a meaningful outcome, so as of now analyzing multiple transcripts is a challenge for ChatGPT. It performs better at deductive coding and at identifying relationships among categories, tasks which require human intervention when you analyze a transcript manually or using CAQDAS.

The ultimate question is: can ChatGPT help us as qualitative researchers get through some of the mundane parts of our jobs that take up large amounts of time, and do a better job at that? As these testing results have shown (Figure 4), the short answer is yes and no(t yet). Though a layer of human interpretation is still needed and the bot does have its inherent limitations, ChatGPT is still capable of making a decent first attempt.

______________________________

[i] March 14 version: free research preview.

[ii] Some of the instructions given to ChatGPT were ‘I am doing a qualitative analysis on this transcript. Identify codes related to this transcript’, ‘What are the suitable codes to analyze this transcript?’, ‘I am giving you a code list to analyze a transcript. Use those codes to analyze this transcript’, ‘I am giving you three parts of a transcript. You have to consider all three parts as one transcript and code it’, ‘What are the emerging themes of the coding you did?’, ‘Categorize these codes based on main themes’, ‘What are the emerging themes of this transcript?’, ‘What do you have to say about this respondent?’

[iii] This respondent was a 24-year-old accounting graduate with five years of professional experience. She began her career as a finance intern and then advanced to become a business designer. She works part-time on her own jewelry business.

[iv] ATLAS.ti version 9 for Mac (released in September 2020).
